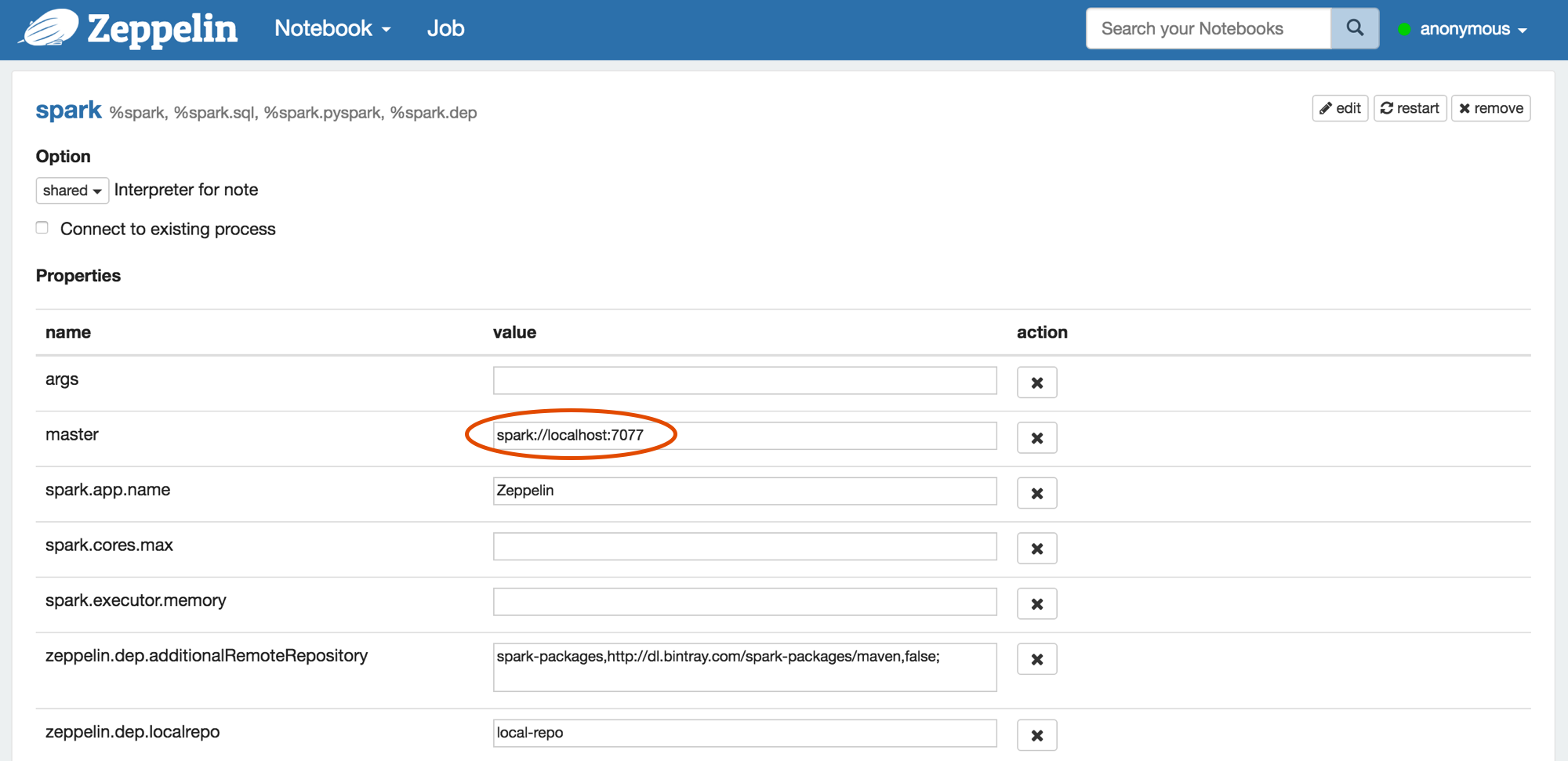

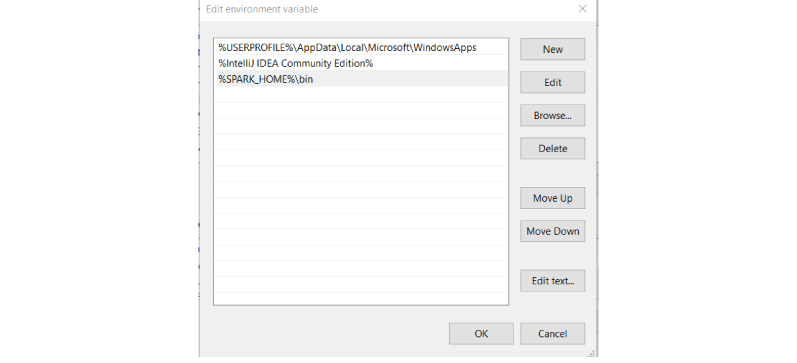

Spark is written in the Scala programming language and runs on the Java Virtual Machine. The first thing you want to do is check whether you meet the prerequisites.

The MapR-DB Connector for Apache Spark

MapR just released Python and Java support for their MapR-DB connector for Spark. It also supports Scala, but Python and Java are new. I recorded a video to help them promote it, but I also learned a lot in the process about how databases can be used in Spark.

If you want to use a database to persist a Spark DataFrame (or RDD, or Dataset), you need a piece of software that connects that database to Spark. A number of databases can be connected to Spark in this way.

Without getting into the relative strengths of one database over another, there are a couple of capabilities you should look for when you’re picking a database to use with Spark. These are characteristics of the database’s Spark connector, not of the database itself. When you select columns and use the SQL where clause to select rows in a table, those operations get executed on the database. All other SQL operators, like order by or group by, are computed in the Spark executor.
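The split between work done in the database and work done in the Spark executor can be pictured with a small stand-in. The sketch below is not the MapR-DB connector and does not use Spark. It uses Python's built-in `sqlite3` module as the "database" and plain Python as the "executor", and the table name, column names, and rows are all invented for illustration. The column selection and the `WHERE` filter run inside the database, while the grouping and ordering run on the client side.

```python
import sqlite3

# Hypothetical sample rows; the schema is invented for this illustration.
ROWS = [
    ("a1", "alice", 10.0),
    ("a1", "bob", 12.5),
    ("a2", "carol", 7.0),
    ("a2", "alice", 30.0),
    ("a3", "bob", 3.0),
]


def fetch_pushed_down(conn, min_bid):
    """Column selection and the WHERE filter are executed by the database."""
    cursor = conn.execute(
        "SELECT auction_id, bid FROM bids WHERE bid >= ?", (min_bid,)
    )
    return cursor.fetchall()


def max_bid_per_auction(rows):
    """Grouping and ordering run on the client, standing in for the Spark executor."""
    best = {}
    for auction_id, bid in rows:
        if auction_id not in best or bid > best[auction_id]:
            best[auction_id] = bid
    return sorted(best.items())


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE bids (auction_id TEXT, bidder TEXT, bid REAL)")
conn.executemany("INSERT INTO bids VALUES (?, ?, ?)", ROWS)

filtered = fetch_pushed_down(conn, 10.0)   # only matching rows and columns leave the database
result = max_bid_per_auction(filtered)     # aggregation happens after the transfer
print(result)
conn.close()
```

The point of the split is that only the rows and columns that survive the filter have to travel from the database to the process doing the aggregation.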

HOW TO INSTALL APACHE SPARK ON MAPR

This section walks through the steps to get it installed on your PC.
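Because Spark runs on the Java Virtual Machine, one prerequisite worth confirming before installing is that a Java runtime is available on the `PATH`. The following is a minimal sketch of such a check using only the Python standard library. The helper names `find_java` and `java_version_banner` are made up for this example, and which Java versions a given Spark release supports should be taken from the Spark documentation, not from this script.

```python
import shutil
import subprocess


def find_java(executable="java"):
    """Return the full path of the Java launcher on PATH, or None if it is absent."""
    return shutil.which(executable)


def java_version_banner(java_path):
    """Run `java -version` and return its text (the JVM normally writes it to stderr)."""
    completed = subprocess.run(
        [java_path, "-version"], capture_output=True, text=True, check=False
    )
    return (completed.stderr or completed.stdout).strip()


java_path = find_java()
if java_path is None:
    print("No java executable found on PATH; install a Java runtime first.")
else:
    print(f"Found Java at {java_path}")
    print(java_version_banner(java_path))
```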

Installing Spark and getting it to work can be a challenge.

INSTALL APACHE SPARK ON MAPR

Learn how to install Apache Spark in standalone mode. Standalone mode is a simple cluster manager incorporated with Spark. It makes it easy to set up a cluster that Spark itself manages, and it can run on Linux, Windows, or macOS. It is often the simplest way to run a Spark application in a clustered environment.

Getting Started with Spark on MapR

Read Data from MapR-FS

1 - Copy the data into MapR File System

For this example we will use a CSV file that contains a list of auctions. The file is located in this project: /data/auctiondata.
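The copy step above can be sketched in Python, under the assumption that the MapR file system is reachable as an ordinary mounted directory (for instance over NFS). The mount location, the sample file name, and the sample rows below are all hypothetical, and the demo uses a temporary directory in place of a real cluster so that it runs anywhere. The preview helper is only a quick way to confirm the file layout before loading it into Spark.

```python
import csv
import shutil
import tempfile
from pathlib import Path


def copy_into_cluster_fs(local_file, cluster_dir):
    """Copy a local file into a directory assumed to be a mounted cluster file system."""
    cluster_dir = Path(cluster_dir)
    cluster_dir.mkdir(parents=True, exist_ok=True)
    return Path(shutil.copy(local_file, cluster_dir))


def preview_csv(path, limit=3):
    """Return the first `limit` rows of a CSV file as lists of strings."""
    with open(path, newline="") as handle:
        reader = csv.reader(handle)
        return [row for _, row in zip(range(limit), reader)]


# Temporary directories stand in for the project folder and the mount point.
with tempfile.TemporaryDirectory() as workdir:
    source = Path(workdir) / "auctions-sample.csv"  # hypothetical file name
    source.write_text("a1,10.0,alice\na1,12.5,bob\na2,7.0,carol\n")  # invented rows
    target = copy_into_cluster_fs(source, Path(workdir) / "mounted-fs" / "apps")
    preview = preview_csv(target, limit=2)
    print(preview)
```

On a real cluster the destination would be whatever path the file system is mounted at, and a command-line copy tool provided by the platform may be the more usual route.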